12 agents, 5 layers, zero hiring: how we built an in-house AI dev team

Over a year ago, in January 2025, we wrote our first prompt in Claude Code. "Write us an email validation function." It worked. Cool. A curiosity.

Today we have 12 AI agents across five layers. A full pipeline: from strategic review of a new idea, through implementation, code review, security audit, testing, SEO optimization, analytics verification, to deployment on a Kubernetes cluster. Without a single job interview. Without onboarding. Without daily standups.

Over 15 months. That's how long it took to go from "write us a function" to "manage a twelve-agent team on production infrastructure." In a large enterprise organization, that's roughly the time two sprints take.

And no, this isn't a story about replacing people. It's a story about how the most expensive resource in a software house isn't called "developer." It's called context.

The problem: glue that slows everything down

Writing code accounts for maybe 30% of the work on a project in a software house. The rest? It's "glue": a multi-stage Dockerfile, RBAC and other DevOps stuff, code review, security audit, E2E tests, GTM configuration, meta tag verification, post-deploy smoke tests. Don't forget client meetings and project management!

Each of these requires context: knowledge of conventions, project history, and service dependencies.

Each one is a bottleneck: code review waits for a free slot, security audits happen once a quarter instead of on every change, and SEO gets checked "at the end, if there's time."

This isn't a code problem. It's a context management problem at scale.

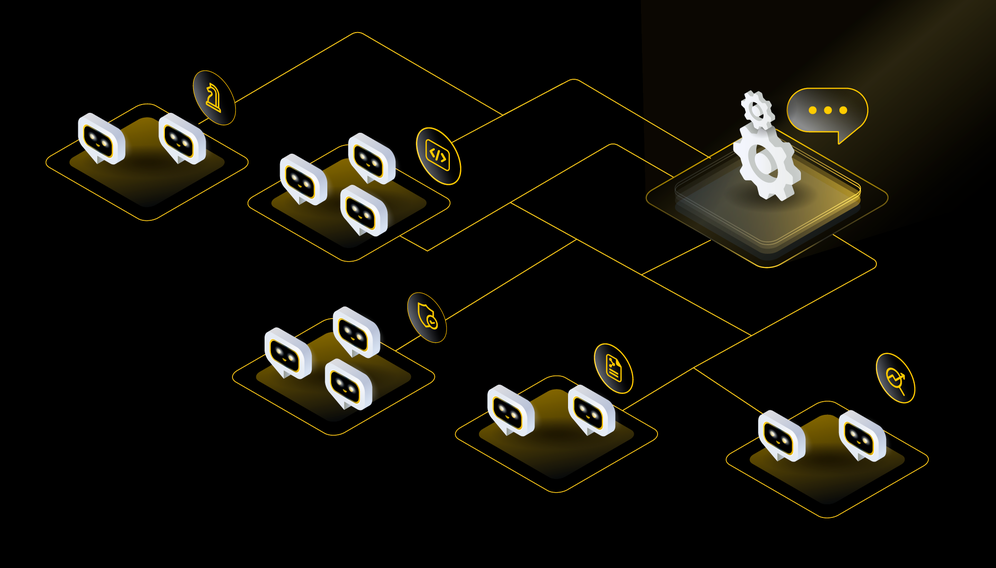

Architecture: 12 agents across 5 layers

We designed bee-team as an answer to this specific problem. Not "because AI is cool." Because the overhead around code costs more than the code itself.

Layer 0 — Strategic Advisory

Visionary

evaluates new ideas from the perspective of market trends, innovation, and business potential. Looks at the "demo factor" — can you show this off? Can you write an article about it? Will developers want to build it?

Pragmatist

is the deliberate counterweight. Evaluates risk, technology maturity (TRL 1-9), costs, and timelines. Asks: "Is there a simpler solution that delivers 80% of the value at 20% of the cost?"

This intentional contrast — optimism versus realism — forces decisions based on arguments, not enthusiasm. Layer 0 only activates for new features, not bugfixes.

Layer 1 — Core Development

PM

breaks down briefs into tasks, coordinates agents, manages the pipeline, logs architecture decisions, and reports. Knows the entire human team and when to escalate.

Backend Developer

implements the backend part of each task, working in parallel with the Frontend Developer.

Frontend Developer

builds Next.js and Vite applications and integrates Storyblok. Knows that output: 'standalone' is mandatory for Docker on our cluster. Knows our routing patterns and Tailwind design tokens.

Layer 2 — Quality & Security

Code Reviewer

checks OWASP Top 10, SOLID, TypeScript strict mode — instantly, on every change. It's read-only: reads, reports, doesn't edit code. If it finds a problem — it loops back to the developer with specific feedback.

QA Tester

verifies acceptance criteria, writes E2E tests, runs smoke tests on K8s after deploy. Checks for CrashLoopBackOff, probe health, ingress response.
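A minimal sketch of the CrashLoopBackOff and probe part of that smoke test, assuming the agent already has the parsed output of kubectl get pods -o json (a PodList). The function name and messages are hypothetical; the containerStatuses, state.waiting.reason, and ready fields are standard Kubernetes pod status. The ingress check is left out.

```python
from typing import Any


def smoke_check(pod_list: dict[str, Any]) -> list[str]:
    """Hypothetical helper: report crash-looping or unready containers.

    `pod_list` is the parsed JSON of `kubectl get pods -o json`.
    """
    problems: list[str] = []
    for pod in pod_list.get("items", []):
        pod_name = pod["metadata"]["name"]
        for status in pod.get("status", {}).get("containerStatuses", []):
            container = f"{pod_name}/{status['name']}"
            # A crash-looping container sits in the "waiting" state with this reason.
            waiting = status.get("state", {}).get("waiting") or {}
            if waiting.get("reason") == "CrashLoopBackOff":
                problems.append(f"{container}: CrashLoopBackOff")
            # "ready" is false when the container is not running
            # or its readiness probe is failing.
            elif not status.get("ready", False):
                problems.append(f"{container}: not ready")
    return problems
```

An empty list means every container is running and passing readiness; anything else goes back into the pipeline as a failed smoke test.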

Security Engineer

audits OWASP Top 10, container security (non-root, alpine, .dockerignore), K8s security context, verifies all secrets flow through ExternalSecrets. Not once a quarter — on every MVP.
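Two of those checks, non-root containers and secrets coming from ExternalSecrets, can be sketched in code. This assumes pod specs and ExternalSecret manifests are already parsed into dicts, and the function names are hypothetical. It relies on two documented behaviors: a container-level securityContext overrides the pod-level one, and an ExternalSecret's spec.target.name defaults to the ExternalSecret's own name. Only env[].valueFrom.secretKeyRef references are inspected; image and .dockerignore checks are out of scope.

```python
from typing import Any


def managed_secret_names(external_secrets: list[dict[str, Any]]) -> set[str]:
    """Hypothetical helper: names of the Secrets that ExternalSecrets produce."""
    names: set[str] = set()
    for es in external_secrets:
        target = es.get("spec", {}).get("target", {})
        # spec.target.name defaults to the ExternalSecret's own name.
        names.add(target.get("name") or es["metadata"]["name"])
    return names


def audit_pod_spec(pod_spec: dict[str, Any], managed: set[str]) -> list[str]:
    """Hypothetical helper: flag possible root containers and unmanaged Secrets."""
    findings: list[str] = []
    pod_ctx = pod_spec.get("securityContext", {})
    for container in pod_spec.get("containers", []):
        name = container["name"]
        ctx = container.get("securityContext", {})
        # The container-level setting overrides the pod-level one.
        non_root = ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot", False))
        if not non_root:
            findings.append(f"{name}: runAsNonRoot is not true")
        for env in container.get("env", []):
            ref = env.get("valueFrom", {}).get("secretKeyRef")
            if ref and ref["name"] not in managed:
                findings.append(
                    f"{name}: env {env['name']} reads Secret {ref['name']}, "
                    "which no ExternalSecret manages"
                )
    return findings
```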

Layer 3 — Optimization & Delivery

DevOps K8s

knows our entire pipeline: image build → push to registry → tag update in values.yaml → ArgoCD sync. Knows Helm charts, values.yaml, ExternalSecrets, RBAC, and 1Password integration. Knows GitOps does not forgive manual fixes, because ArgoCD will roll them back anyway. Also knows the cluster constraints enforced by Kyverno.
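The tag-update step is where the GitOps rule shows up: the change lands in Git, and ArgoCD reconciles the cluster to match. A minimal sketch, assuming values.yaml has already been parsed into a dict with an image.tag key (that layout is an assumption, not our actual chart) and that a separate step commits the result. Rejecting the latest tag is an illustrative rule, not one stated above.

```python
import copy
from typing import Any


def bump_image_tag(values: dict[str, Any], new_tag: str) -> dict[str, Any]:
    """Hypothetical helper: return a copy of Helm values with image.tag replaced.

    The caller commits the result to Git; ArgoCD then syncs the cluster,
    so nothing is ever patched in the cluster by hand.
    """
    # Illustrative assumption: only explicit, immutable tags are deployed.
    if not new_tag or new_tag == "latest":
        raise ValueError("use an explicit, immutable image tag")
    updated = copy.deepcopy(values)  # never mutate the caller's values
    updated.setdefault("image", {})["tag"] = new_tag
    return updated
```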

Performance Engineer

monitors Core Web Vitals (LCP < 2.5s, INP < 200ms, CLS < 0.1) and analyzes bundle size, application startup time, and resource requests and limits. Checks resource quotas against Kyverno policies, probe configuration, and rollout stability. Ensures new deploys do not break what is already working.
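The Core Web Vitals thresholds above translate into a simple budget check. A sketch, assuming the agent has per-page-load samples for each metric (LCP in seconds, INP in milliseconds, CLS unitless). Google recommends assessing the 75th percentile of page loads; this version uses the nearest-rank percentile and, like web.dev, counts a value exactly at the threshold as good. The names are hypothetical.

```python
import math

# Thresholds from the text: LCP in seconds, INP in milliseconds, CLS unitless.
BUDGETS = {"LCP": 2.5, "INP": 200.0, "CLS": 0.1}


def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def vitals_report(samples: dict[str, list[float]]) -> dict[str, tuple[float, bool]]:
    """Hypothetical helper: map each metric to (p75 value, within budget?)."""
    report: dict[str, tuple[float, bool]] = {}
    for metric, budget in BUDGETS.items():
        values = samples.get(metric)
        if not values:
            continue  # no data for this metric, nothing to judge
        value = p75(values)
        report[metric] = (value, value <= budget)
    return report
```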

Layer 4 — SEO & Analytics

SEO Specialist

audits meta tags (title 50-60 chars, description 150-160), heading hierarchy H1-H6, robots.txt, sitemap, Schema.org JSON-LD, hreflang for multilingual versions. Read-only — reports findings, doesn't fix code.
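A sketch of the meta tag and heading checks using only Python's standard html.parser. The length ranges come from the text; requiring exactly one H1 and flagging skipped levels (an h2 followed directly by an h4) are our illustrative assumptions about what "heading hierarchy" means. Class and function names are hypothetical.

```python
from html.parser import HTMLParser


class SeoParser(HTMLParser):
    """Hypothetical parser: collects title, meta description, heading levels."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description: str | None = None
        self.headings: list[int] = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""
        elif len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.headings.append(int(tag[1]))

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_seo(html: str) -> list[str]:
    """Read-only audit: returns findings, never edits the page."""
    parser = SeoParser()
    parser.feed(html)
    parser.close()
    findings: list[str] = []
    title = parser.title.strip()
    if not 50 <= len(title) <= 60:
        findings.append(f"title is {len(title)} chars (want 50-60)")
    if parser.description is None:
        findings.append("meta description missing")
    else:
        length = len(parser.description.strip())
        if not 150 <= length <= 160:
            findings.append(f"description is {length} chars (want 150-160)")
    h1_count = parser.headings.count(1)
    if h1_count != 1:  # assumption: exactly one H1 per page
        findings.append(f"expected exactly one h1, found {h1_count}")
    # A jump of more than one level (h2 -> h4) breaks the hierarchy.
    for prev, cur in zip(parser.headings, parser.headings[1:]):
        if cur > prev + 1:
            findings.append(f"heading level skipped: h{prev} -> h{cur}")
    return findings
```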

Web Analyst

verifies GTM (snippet in head + noscript), GA4 (Measurement ID, Enhanced Measurement), dataLayer (reset before routeChange in SPAs!), custom events. Checks firing rates, identifies 0% on critical triggers.
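A sketch of the GTM placement check, assuming the agent has the rendered HTML and the expected container ID from the project context. It looks for an inline head script that loads googletagmanager.com/gtm.js and for a noscript iframe pointing at googletagmanager.com/ns.html, both carrying the expected GTM- ID. GA4 and dataLayer behavior need a real browser and are not covered. The names are hypothetical.

```python
import re
from html.parser import HTMLParser

GTM_ID = re.compile(r"GTM-[A-Z0-9]+")


class GtmParser(HTMLParser):
    """Hypothetical parser: GTM IDs in <head> scripts and <noscript> iframes."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.in_script = False
        self.in_noscript = False
        self.script_text = ""
        self.head_ids: set[str] = set()
        self.noscript_ids: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script":
            self.in_script, self.script_text = True, ""
        elif tag == "noscript":
            self.in_noscript = True
        elif tag == "iframe" and self.in_noscript:
            src = dict(attrs).get("src") or ""
            if "googletagmanager.com/ns.html" in src:
                self.noscript_ids.update(GTM_ID.findall(src))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False
        elif tag == "noscript":
            self.in_noscript = False
        elif tag == "script":
            # The inline snippet builds a gtm.js URL and passes the container ID.
            if self.in_head and "googletagmanager.com/gtm.js" in self.script_text:
                self.head_ids.update(GTM_ID.findall(self.script_text))
            self.in_script = False

    def handle_data(self, data):
        if self.in_script:
            self.script_text += data  # script text may arrive in several chunks


def audit_gtm(html: str, expected_id: str) -> list[str]:
    parser = GtmParser()
    parser.feed(html)
    parser.close()
    findings: list[str] = []
    if expected_id not in parser.head_ids:
        findings.append(f"{expected_id}: gtm.js snippet missing from <head>")
    if expected_id not in parser.noscript_ids:
        findings.append(f"{expected_id}: noscript iframe fallback missing")
    return findings
```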

Layer 4 activates only for frontend changes. Pure backend? Skip.

Pipeline: how it works together

Phase 0: Visionary + Pragmatist → strategic review (new features only)

Phase A: Backend + Frontend → implementation (parallel)

Phase B: Code Review → fix loop until APPROVED

Phase C: Security + Performance + QA → audit, E2E, acceptance criteria (parallel)

Phase D: SEO + Web Analyst → frontend quality (frontend only)

Phase E: DevOps K8s → deploy to cluster

→ PM Final Report

Parallelism is key. Visionary and Pragmatist work simultaneously and deliberately independently, so they don't influence each other. Backend and Frontend start together. Security, Performance, and QA launch at the same time.

Quality gates enforce ordering: Code Review must pass before Security starts. SEO doesn't fire for pure backend. Deploy is always last.
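The phase ordering and parallelism can be sketched with Python's asyncio. This is an illustration under assumptions, not our orchestrator: each agent is modeled as a hypothetical async callable that takes a prompt and returns a report, the Code Reviewer returns the literal string APPROVED when satisfied, and the limit of three review rounds before escalating to a human is an assumed value.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Awaitable, Callable

# Hypothetical agent interface: takes a prompt, returns a text report.
Agent = Callable[[str], Awaitable[str]]


@dataclass
class Change:
    brief: str
    is_new_feature: bool
    touches_frontend: bool
    touches_backend: bool = True


@dataclass
class Pipeline:
    agents: dict[str, Agent]
    max_review_rounds: int = 3  # assumed limit before a human takes over
    log: list[str] = field(default_factory=list)

    async def _parallel(self, names: list[str], prompt: str) -> None:
        """Run independent agents concurrently and record their reports."""
        reports = await asyncio.gather(*(self.agents[n](prompt) for n in names))
        self.log.extend(f"{n}: {r}" for n, r in zip(names, reports))

    async def run(self, change: Change) -> list[str]:
        if change.is_new_feature:  # Phase 0: independent strategic review
            await self._parallel(["visionary", "pragmatist"], change.brief)
        devs = [name for name, on in (("backend", change.touches_backend),
                                      ("frontend", change.touches_frontend)) if on]
        await self._parallel(devs, change.brief)  # Phase A
        for _ in range(self.max_review_rounds):  # Phase B: fix loop
            verdict = await self.agents["code_reviewer"](change.brief)
            self.log.append(f"code_reviewer: {verdict}")
            if verdict == "APPROVED":
                break
            await self._parallel(devs, f"Address review feedback: {verdict}")
        else:
            raise RuntimeError("review not approved; escalate to the human owner")
        # Phase C starts only after review passes: the sequential await is the gate.
        await self._parallel(["security", "performance", "qa"], change.brief)
        if change.touches_frontend:  # Phase D: skipped for pure backend changes
            await self._parallel(["seo", "web_analyst"], change.brief)
        await self._parallel(["devops_k8s"], change.brief)  # Phase E: always last
        self.log.append("pm: final report")
        return self.log
```

Phase C findings are only logged in this sketch; a real gate would also stop the deploy when an audit fails.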

Every agent has a human owner and a backup from the team. When an agent doesn't know something, it escalates to a human instead of guessing.

Project context architecture

Agents don't know project specifics. They're project-agnostic: their definitions contain no repo names, URLs, or GTM IDs. Why? Because the same team of 12 agents serves dozens of projects.

Each project has its own context file with repo, URLs, specific rules, and analytics identifiers. The PM reads the context at kickoff and distributes the path to all agents. Zero copy-paste. Zero onboarding.

New project? Write a context file, and the entire team knows it. Just as a new hire gets a wiki, an agent gets a context file. Except the agent reads it in a second, not a week.
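A minimal sketch of that kickoff step, assuming a JSON context file; the format and the field names repo, urls, rules, and analytics are illustrative assumptions. The PM validates the file once, then hands every agent the same path instead of pasting its contents.

```python
import json
from dataclasses import dataclass
from pathlib import Path

# Hypothetical schema: the fields every project context file must define.
REQUIRED = ("repo", "urls", "rules", "analytics")


@dataclass(frozen=True)
class ProjectContext:
    repo: str
    urls: dict[str, str]
    rules: list[str]
    analytics: dict[str, str]


def load_context(path: Path) -> ProjectContext:
    """Parse and validate a context file; fail fast if a field is missing."""
    data = json.loads(path.read_text(encoding="utf-8"))
    missing = [key for key in REQUIRED if key not in data]
    if missing:
        raise ValueError(f"{path}: missing fields {missing}")
    return ProjectContext(**{key: data[key] for key in REQUIRED})


def kickoff_messages(context_path: Path, agents: list[str]) -> dict[str, str]:
    """Hypothetical PM step: validate once, then give every agent the same path."""
    load_context(context_path)  # a broken file stops the kickoff here
    return {agent: f"Read the project context at {context_path} before starting."
            for agent in agents}
```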

What's next?

Over a year ago there was no MCP. Six months ago there were no subagents. Three months ago there were no Managed Agents. Today — 12 agents with SEO and analytics in a full pipeline.

We don't know what comes next year. And that's the most honest answer we can give. Because if in January 2025 we'd said "in a year we'll have 12 agents with a strategic layer and analytics on Kubernetes," nobody would have believed us.

But one thing we know: the question "will AI replace developers" is poorly framed.

The right question: how much of a developer's work is actually context management? Reading documentation. Checking conventions. Verifying that the Helm chart has the right targetPort. Making sure the dataLayer resets before a new event in a SPA.

An agent doesn't write better code than a senior. But a senior writes better code when the agent handles review, testing, SEO, analytics, and deployment. This isn't replacing legs — it's an exoskeleton that lets you run faster.

We're not building AI to replace the team. We're building AI so the team does what it does best — think, design, and make decisions that require business context, empathy, and experience.

And that Helm chart? It'll check it for you. Instantly.
